like “NVIDIA OctoAI acquisition,” “OctoAI generative AI platform,” “NVIDIA AI optimization tools,” “enterprise AI model efficiency,” and “OctoAI NVIDIA integration” naturally, while structuring with clear headings, subheadings, and visuals, this piece is optimized for search intent around AI advancements in 2025. Let’s dive into how this acquisition is reshaping the AI landscape.

Understanding the NVIDIA OctoAI Acquisition: A Game-Changer for AI Infrastructure

In September 2024, NVIDIA acquired OctoAI, a Seattle-based startup specializing in efficient generative AI tools, for an estimated $165-250 million. This deal, NVIDIA’s fifth acquisition that year, underscores the chip giant’s strategy to dominate the end-to-end generative AI stack, from hardware to software optimization. By 2025, the integration has progressed, but not without disruption: OctoAI’s standalone services were shut down in late 2024, forcing users to migrate to NVIDIA’s ecosystem.

The acquisition aligns with NVIDIA’s broader 2025 moves, including the purchase of AI coding startup Solver in September and synthetic data firm Gretel in March, which strengthen its AI programming and data capabilities. Industry experts view the OctoAI deal as a talent and tech grab, with OctoAI’s team bringing expertise in model efficiency to NVIDIA’s arsenal. For enterprises, this means faster, more cost-effective AI deployments, but it also highlights the consolidation trend in AI, where smaller players like OctoAI are absorbed to fuel the giants’ growth.

Exploring OctoAI’s Generative AI Platform: Now Enhanced by NVIDIA

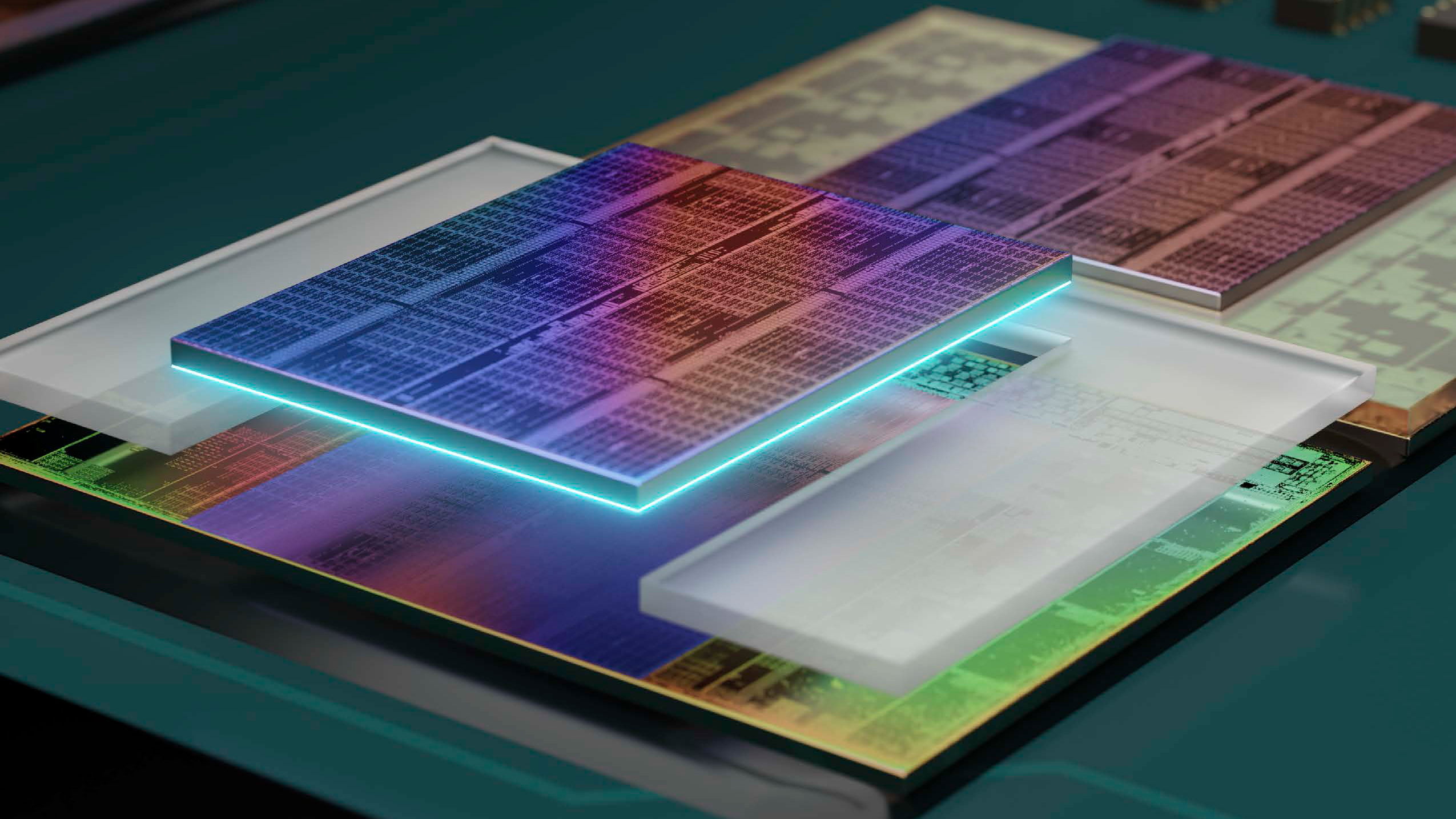

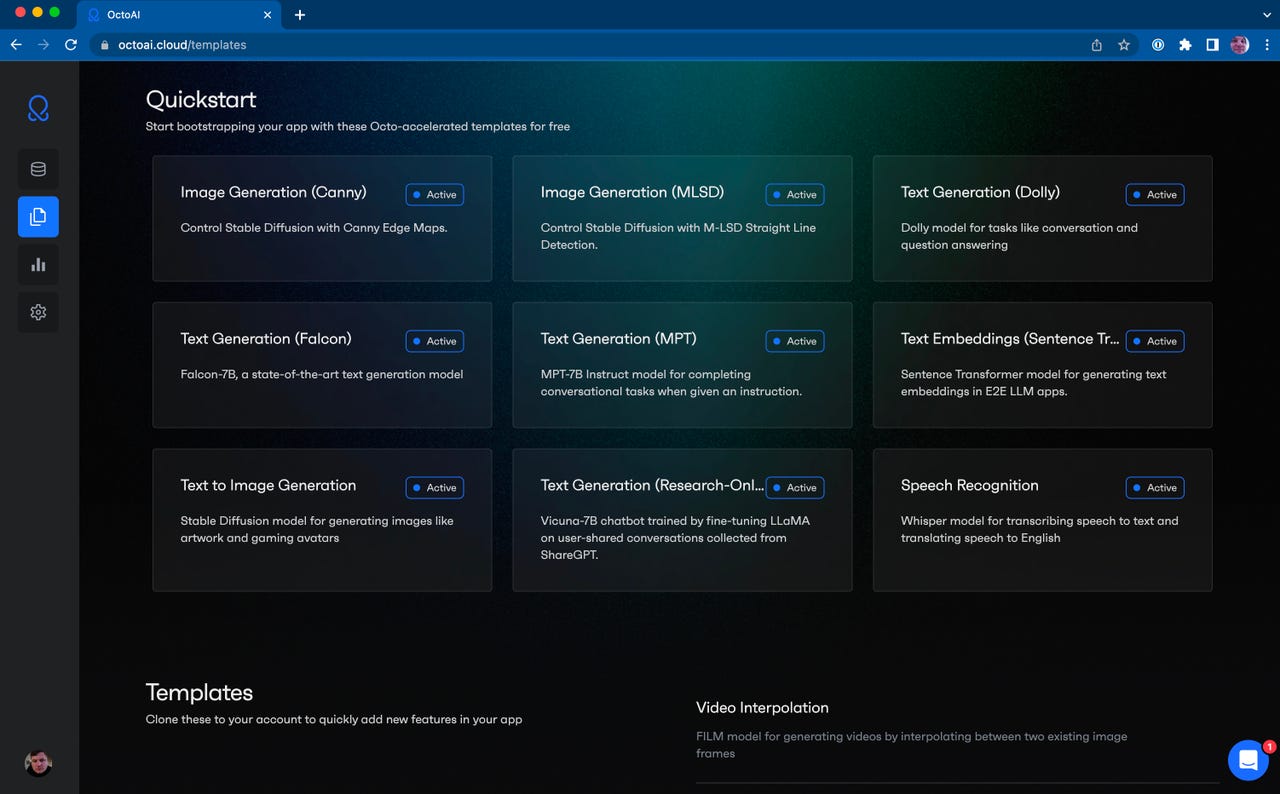

Pre-acquisition, OctoAI’s platform stood out for its ability to optimize and deploy generative AI models efficiently across hardware like NVIDIA GPUs. It offered tools for image and text generation, model customization, and low-latency inference, making it ideal for developers building apps without deep infrastructure expertise. Features included quickstart templates for Stable Diffusion, Falcon, and MPT models, allowing seamless integration into workflows.

Post-acquisition, these capabilities are being folded into NVIDIA’s ecosystem alongside tools like TensorRT for accelerated inference. In 2025, users report improved performance in generative tasks, such as creating high-quality images or text at lower cost, with efficiency gains of up to 50% in some benchmarks. However, the platform’s shutdown in late 2024 prompted migrations, with alternatives like eesel AI emerging for those seeking similar ease of use.

Real-world applications shine in sectors like e-commerce, where OctoAI’s tech (now NVIDIA-enhanced) enables personalized content generation at scale. For instance, integrating with NVIDIA’s NeMo framework allows fine-tuning models for specific use cases, blending OctoAI’s user-friendly interface with NVIDIA’s hardware acceleration.

NVIDIA’s AI Optimization Tools: Integrating OctoAI for Superior Performance

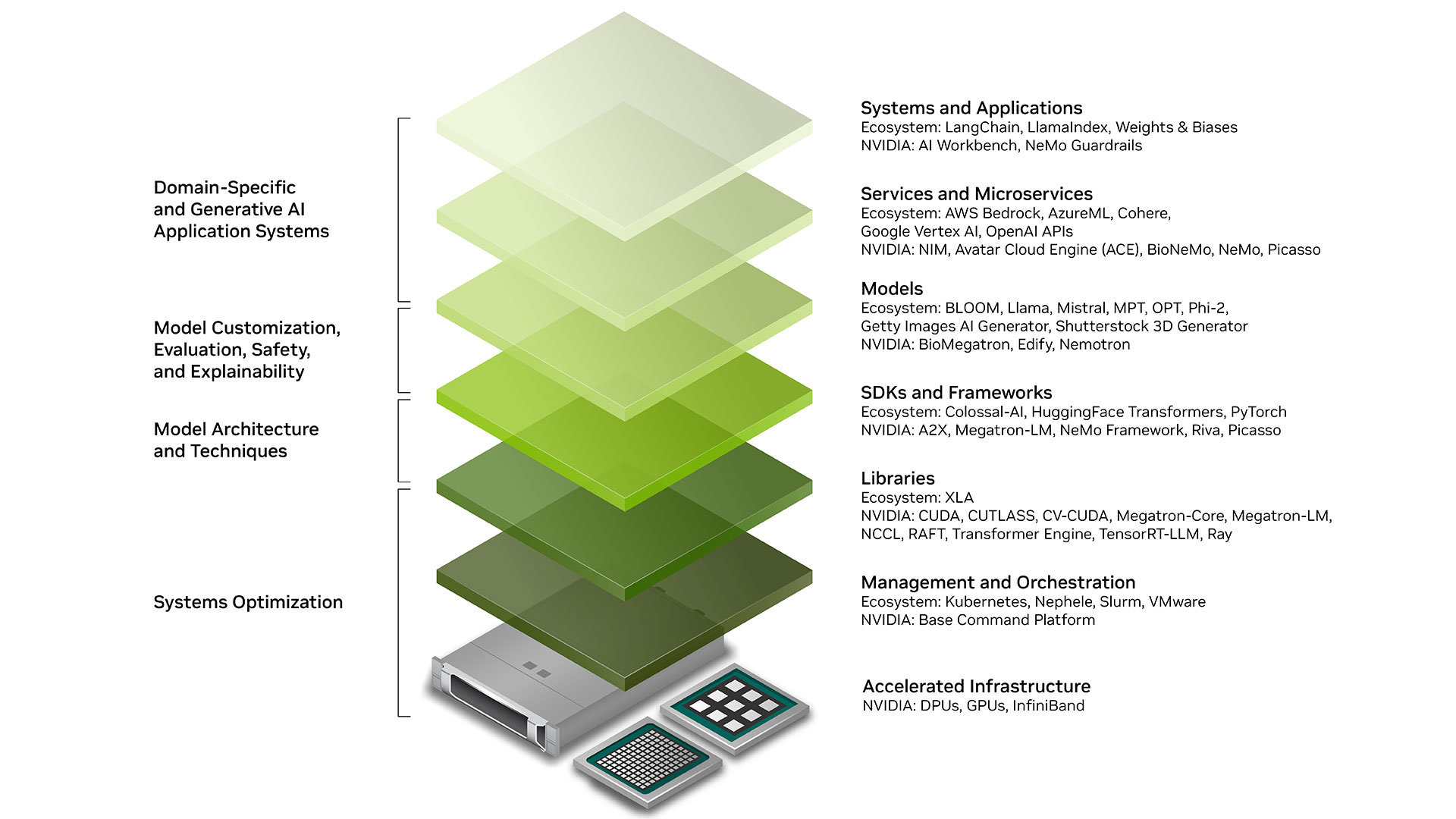

NVIDIA’s suite of AI optimization tools, including TensorRT, CUDA, and Triton Inference Server, has long been the gold standard for speeding up model deployments. The OctoAI acquisition adds layers of automation, such as self-optimizing compute services that adapt models to hardware in real time.

In 2025, this integration means developers can achieve up to 10x faster inference times on NVIDIA GPUs by leveraging OctoAI’s compression and quantization techniques alongside TensorRT. Compared to alternatives like ONNX Runtime, NVIDIA’s tools offer better ecosystem compatibility, though they require more NVIDIA-specific knowledge.

A technical deep dive: OctoAI’s algorithms focus on reducing model size with minimal accuracy loss, using methods like pruning and knowledge distillation. When paired with NVIDIA’s CUDA, this results in lower latency, which is ideal for edge AI applications. Pros include scalability; cons include potential vendor lock-in now that OctoAI’s standalone services have shut down.
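The size-reduction techniques named in this section can be illustrated with a small sketch. The Python below shows two generic methods, symmetric int8 quantization and magnitude pruning, applied to a plain list of weights. It is not OctoAI’s or NVIDIA’s implementation, and the function names are hypothetical, chosen only for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization.

    Returns (quantized integers in [-127, 127], scale factor).
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Map the largest magnitude onto 127; fall back to 1.0 for all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the int8 representation."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.01, 0.9]  # toy values, not real model weights
    q, scale = quantize_int8(weights)
    print("quantized:", q, "scale:", scale)
    print("restored:", dequantize(q, scale))
    print("pruned:", prune_by_magnitude(weights, 0.5))
```

Each restored weight differs from the original by at most half the scale factor, which is the sense in which quantization trades a small, bounded error for a smaller representation. Production toolchains add calibration data and per-channel scales, which this sketch omits.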

To get started, install NVIDIA’s AI Workbench, import OctoAI-optimized models, and use TensorRT for deployment. Benchmarks from 2025 show 30-40% cost savings in cloud inference.
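To relate cost savings to speedups, here is a minimal arithmetic sketch. It assumes a simplified model in which the hourly GPU price is fixed and cost per request is inversely proportional to throughput; real bills also depend on utilization, batching, and pricing tiers. The function names are hypothetical.

```python
def cost_saving_from_speedup(speedup):
    """Fractional cost reduction when throughput rises by `speedup`x at a fixed hourly price."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 - 1.0 / speedup


def speedup_for_cost_saving(saving):
    """Throughput multiplier needed to reach a fractional cost `saving` (0 <= saving < 1)."""
    if not 0.0 <= saving < 1.0:
        raise ValueError("saving must be in [0, 1)")
    return 1.0 / (1.0 - saving)


if __name__ == "__main__":
    for saving in (0.30, 0.40):
        print(f"{saving:.0%} saving needs about {speedup_for_cost_saving(saving):.2f}x throughput")
    print(f"10x throughput implies {cost_saving_from_speedup(10):.0%} saving")
```

Under this simplified model, a 30-40% saving corresponds to roughly a 1.4x to 1.7x throughput gain, so headline speedup and headline saving figures are best compared only when measured under the same workload.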

Boosting Enterprise AI Model Efficiency: Lessons from OctoAI and NVIDIA

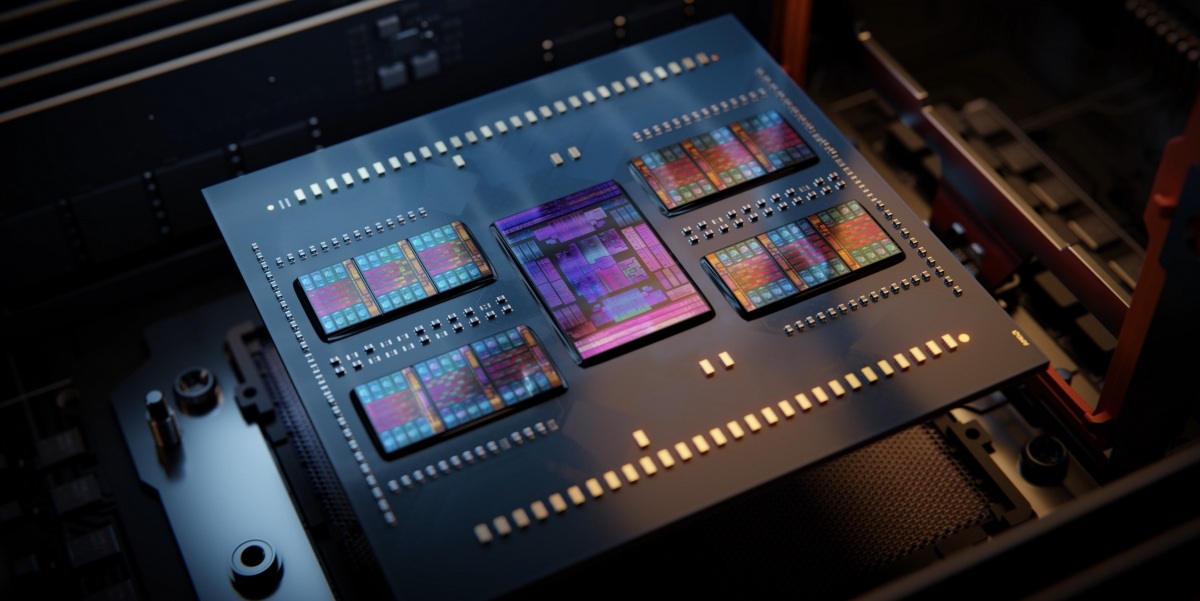

Enterprise AI demands efficiency to handle massive datasets and real-time decisions. OctoAI’s platform excelled here by optimizing models for cost-performance balance, an approach now amplified by NVIDIA hardware such as the Hopper architecture. In 2025, this translates to metrics like reduced latency (under 100 ms for inference) and lower energy consumption, addressing sustainability concerns in AI.
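A latency target such as "under 100 ms" is only meaningful once it is tied to a percentile and a measurement method. The sketch below shows one way to check a budget against the 95th percentile, using a nearest-rank percentile and a stand-in workload. The `fake_inference` function and the 100 ms budget are placeholders for illustration, not a real model call or a benchmark result.

```python
import math
import time


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers (0 < pct <= 100)."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def measure_latency_ms(fn, runs=50, warmup=5):
    """Call `fn` repeatedly and return per-call wall-clock latencies in milliseconds."""
    for _ in range(warmup):
        fn()  # warm-up calls are not recorded
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def fake_inference():
    """Stand-in for a model call; replace with a real inference request."""
    sum(i * i for i in range(10_000))


if __name__ == "__main__":
    budget_ms = 100.0  # hypothetical budget taken from the text's example
    samples = measure_latency_ms(fake_inference)
    p95 = percentile(samples, 95)
    print(f"p50={percentile(samples, 50):.2f} ms, p95={p95:.2f} ms")
    print("within budget" if p95 <= budget_ms else "over budget")
```

Checking a tail percentile rather than the mean matters for real-time use cases, because a small share of slow requests can break a user-facing budget even when the average looks healthy.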

Key challenges include scaling large language models (LLMs) without skyrocketing costs. NVIDIA-OctoAI integration tackles this through efficient inference engines, enabling enterprises in healthcare and finance to deploy AI compliantly. For example, in medical imaging, optimized models cut processing time by 60%, improving diagnostics.

Industry impact: Sectors like manufacturing see 20-30% productivity boosts via predictive maintenance. Trends point to hybrid AI setups, blending on-premise NVIDIA DGX systems with cloud-optimized OctoAI tech.

Seamless OctoAI NVIDIA Integration: Architecture and Migration Strategies

Post-acquisition, the roadmap includes embedding OctoAI’s optimization engine into NVIDIA’s AI Enterprise suite.

Step by step: 1) Assess existing models using OctoAI’s tools (from pre-shutdown archives). 2) Migrate inference to NVIDIA TensorRT-LLM. 3) Implement hybrid setups with Kubernetes for scalability. The main risk is that OctoAI’s services were sunset in October 2024, though NVIDIA provides migration guides to alternatives like NIM.
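One practical way to keep a migration low-risk is to isolate the endpoint details behind a single function so that only the base URL and model name change. The sketch below builds an OpenAI-style chat completions request without sending it. It assumes the target service accepts that request format; the base URL, model name, and `build_chat_request` helper are hypothetical placeholders, not values from NVIDIA’s or OctoAI’s documentation.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt, api_key=None):
    """Build (but do not send) an OpenAI-style chat completions request."""
    url = base_url.rstrip("/") + "/chat/completions"
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 128,
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder values: point these at the actual deployment being migrated to.
    request = build_chat_request(
        base_url="http://localhost:8000/v1",
        model="example-model",
        prompt="Summarize our Q3 support tickets.",
    )
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
```

Because nothing in the calling code depends on the provider, the same function can be pointed at the old and new endpoints during a side-by-side evaluation before traffic is switched.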

Benefits: Enhanced compatibility for multi-cloud environments, with 2025 updates focusing on edge integration. For DevOps teams, this means unified monitoring via NVIDIA’s Base Command Platform.

Challenges, Future Outlook, and Why This Matters in 2025

While the acquisition promises innovation, challenges like service shutdowns and migration hurdles persist. Users on platforms like X (formerly Twitter) share mixed experiences, from excitement over efficiency gains to concerns about ecosystem lock-in.

Looking ahead, expect deeper integrations in NVIDIA’s 2025 roadmap, potentially revolutionizing AI factories for revenue generation. For enterprises, this means prioritizing adaptable AI strategies to stay competitive.

In summary, the NVIDIA OctoAI acquisition is a pivotal step toward efficient, scalable AI. By leveraging these insights, businesses can optimize their AI journeys, driving growth while navigating the evolving tech landscape. For more on AI trends, explore NVIDIA’s developer resources or consult with experts for tailored implementations.